With COVID-19 drastically altering daily life for people across the planet, the U.S. Department of Energy’s (DOE) Argonne National Laboratory has moved quickly to join the global fight against the pandemic. Among the laboratory’s most powerful resources for scientific research is the supercomputer Theta, housed at the Argonne Leadership Computing Facility (ALCF), a DOE Office of Science User Facility. Over 250 nodes on the machine were immediately reserved for multipronged research into the disease.

Led by Argonne computational scientist Jonathan Ozik and Argonne Distinguished Fellow Charles (Chick) Macal, one of these branches of research oversees the development of epidemiological models to simulate the spread of COVID-19 throughout the population.

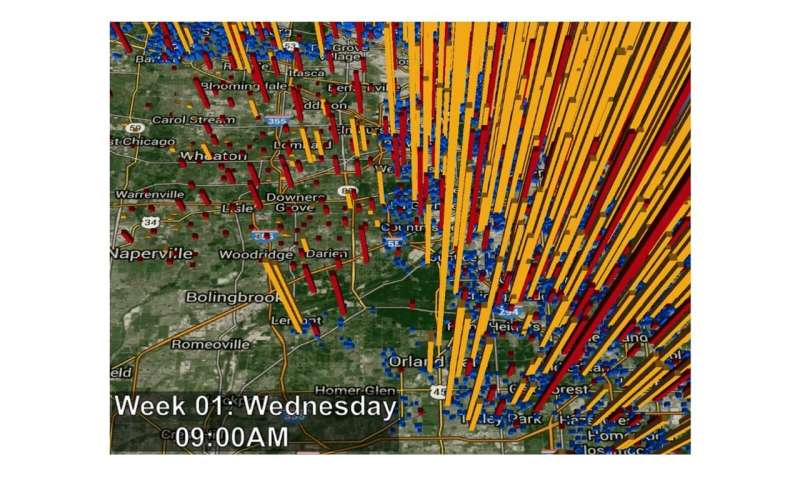

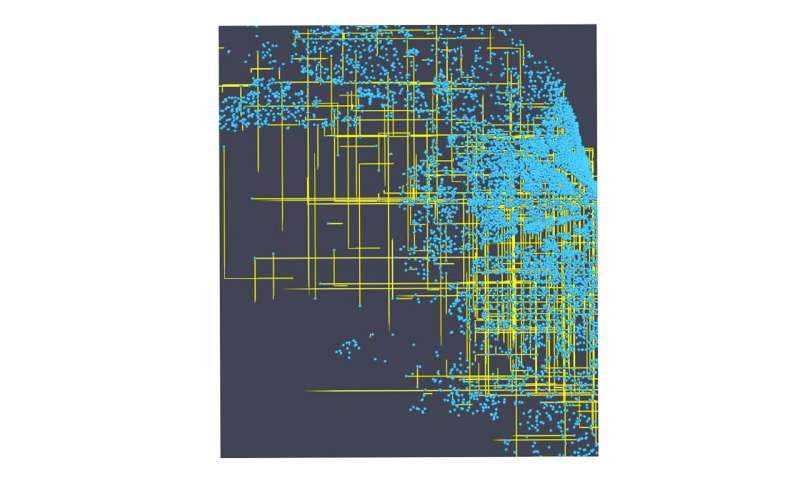

The models are city-scale simulations of Chicago, populated with just under 3 million agents that represent individuals going about their daily schedules and navigating some 1.2 million sites (houses, schools, workplaces, and so on). Each site presents possibilities for agents to meet, or colocate, and therefore possibilities for exposure. Following exposure, an agent can become infected, in some cases severely, depending on the agent’s profile, which includes age. A certain number of the infected agents then perish.
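To make the idea of colocation concrete, here is a minimal Python sketch of how agents following hourly schedules might be grouped by site to find possible exposures. It is an illustration only, not the Argonne team's code: the agent names, site names, the `schedules` layout, and the `colocated_pairs` helper are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations

def colocated_pairs(schedules, hour):
    """Return sorted (agent, agent, site) triples for agents sharing a site at `hour`.

    `schedules` maps an agent id to a dict of hour -> site id (a hypothetical layout).
    """
    occupants = defaultdict(list)
    for agent_id, schedule in schedules.items():
        site = schedule.get(hour)
        if site is not None:  # agents with no entry for this hour are skipped
            occupants[site].append(agent_id)
    pairs = []
    for site, agents in occupants.items():
        # Every pair of agents at the same site is a possible exposure.
        pairs.extend((a, b, site) for a, b in combinations(sorted(agents), 2))
    return sorted(pairs)

# Three illustrative agents moving between homes, a school, and a workplace.
schedules = {
    "agent_a": {8: "home_1", 9: "school_1"},
    "agent_b": {8: "home_1", 9: "work_1"},
    "agent_c": {8: "home_2", 9: "school_1"},
}

morning = colocated_pairs(schedules, 8)
later = colocated_pairs(schedules, 9)
```

In this toy setup, two agents share a home at hour 8 and a different pair shares a school at hour 9. A city-scale model would need far more efficient data structures than this pairwise enumeration.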

These models, which run for a simulated year, are revised and improved daily to reflect the most up-to-date data and information. The team is moving this update process toward a completely automated workflow.

“The workflow ingests updated epidemiological data—for instance, that published daily by the Chicago Department of Public Health—which serve as empirical target trajectories. By comparing these with outputs generated from ensemble model runs, we are able to estimate the pandemic’s underlying parameters,” Ozik said. “It is these calibrated parameters that enable us to run different scenarios with the model.”
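The calibration Ozik describes can be sketched in a few lines of Python under heavy simplifying assumptions. A toy stochastic growth model stands in for the agent-based simulation, synthetic numbers stand in for the public health data, and a simple grid search picks the parameter whose ensemble average best matches the target trajectory. The function names, the single `transmission_rate` parameter, and the squared-error score are all hypothetical. The team's actual calibration method is not described here.

```python
import random

def simulate_cases(transmission_rate, days, seed, initial_cases=10.0):
    """Toy stochastic growth model; a hypothetical stand-in for the real simulation."""
    rng = random.Random(seed)
    cases = initial_cases
    trajectory = []
    for _ in range(days):
        noise = rng.uniform(0.95, 1.05)  # small multiplicative noise per day
        cases *= (1.0 + transmission_rate) * noise
        trajectory.append(cases)
    return trajectory

def ensemble_mean(transmission_rate, days, seeds):
    """Average the daily case counts over one run per seed."""
    runs = [simulate_cases(transmission_rate, days, s) for s in seeds]
    return [sum(day_values) / len(runs) for day_values in zip(*runs)]

def squared_error(simulated, observed):
    return sum((s - o) ** 2 for s, o in zip(simulated, observed))

def calibrate(observed, candidate_rates, seeds):
    """Return the candidate rate whose ensemble mean is closest to the target."""
    days = len(observed)
    scored = [
        (squared_error(ensemble_mean(rate, days, seeds), observed), rate)
        for rate in candidate_rates
    ]
    return min(scored)[1]

# Synthetic target trajectory (not real data): 20 percent daily growth for 14 days.
observed = [10.0 * (1.2 ** (day + 1)) for day in range(14)]
candidates = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30]
best_rate = calibrate(observed, candidates, seeds=range(20))
```

The calibrated value can then be fixed while other inputs are varied, which is the sense in which calibrated parameters make scenario runs possible.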

“This is the most detailed granular simulation of COVID-19 that exists right now in terms of modeling individuals who could be in various disease states, including infectious or hospitalized,” Macal said.

The models pursue lines of inquiry that will be familiar to anyone following the virus in the news media—for example, the difference in outcome yielded by implementing social distancing measures for however many additional days or weeks.

“What are good ways to ease off the social distancing measures?” Ozik asked. “Everybody’s interested in that for very obvious reasons, but we don’t want to do something that will just create another calamity a few months down the road.”

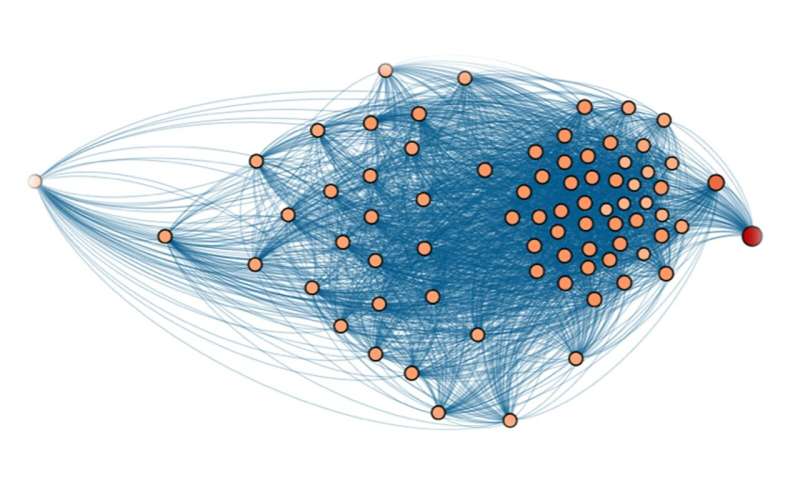

The project’s significant computational demands result from the models’ stochastic (randomly determined) components, which account for the underlying uncertainties and parameters of the simulation. These parameters govern agent behaviors, as well as disease progression dynamics and transmissibility. Within the model, transmissibility encapsulates the likelihood that a susceptible agent is infected by an infectious one, based on the amount of time the two spend together.
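As a rough illustration of a time-dependent, stochastic transmission rule, the Python sketch below assumes an exponential dose-response form, p = 1 - exp(-rate × hours), which is a common modeling choice. The article does not say which functional form the Argonne model uses, and the `rate_per_hour` parameter and function names are hypothetical.

```python
import math
import random

def infection_probability(contact_hours, rate_per_hour):
    """Assumed form: probability rises with contact time and saturates below 1."""
    return 1.0 - math.exp(-rate_per_hour * contact_hours)

def is_infected(contact_hours, rate_per_hour, rng):
    """Draw one random outcome for a single susceptible-infectious contact."""
    return rng.random() < infection_probability(contact_hours, rate_per_hour)

# Repeating the same contact many times shows why ensembles of runs are needed:
# each draw can differ, and only the frequency approaches the probability.
rng = random.Random(42)
trials = 10_000
infections = sum(is_infected(10.0, 0.1, rng) for _ in range(trials))
observed_frequency = infections / trials
```

Because every contact is resolved by a random draw, a single run is only one possible outcome, which is why many runs are needed to characterize the range of results.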

“With this model, you have potentially many people interacting in many different ways: some might be infected, some might be susceptible, and they mix in different proportions in a variety of different locations—there are different locations like schools and workplaces where very different parts of the population interface,” Ozik explained. “The multitude of possibilities the model presents make it quite qualitatively different from—and quantitatively more complex than—a statistical model or more simplified compartmental models, which are much faster to run.”

With optimization assistance from ALCF staff, simulation runs on Theta have used more than 800 nodes at once. As part of the automated workflow, output data from these runs are transferred to Petrel, a service provided by Argonne and Globus (a University of Chicago-run non-profit committed to data management), for archival storage and post-processing. The post-processing is completed on Bebop, a high-performance computing cluster operated by Argonne’s Laboratory Computing Resource Center that the team also leverages for simulation runs.
